“You’re Just Not My Type (of error)”

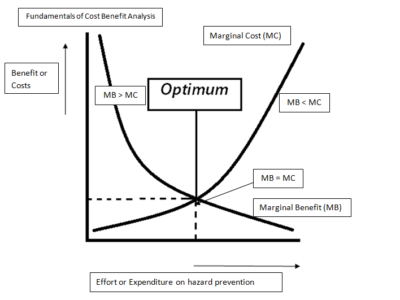

Most people find statistics off-putting: who wants to look at a bunch of numbers? And statistics courses, which are required for students in many majors, are usually viewed as a painful box to check. But when you put aside the numbers and the technicalities, statisticians also have some simple yet powerful concepts. One of them is the distinction between Type 1 and Type 2 errors. I guess you can tell from the less-than-gripping labels why many students don’t find statistics courses enticing. But if more people understood the distinction, it would help improve public debate on a lot of issues.

It’s probably easiest to explain the types of errors using a criminal trial as an example. A Type 1 error is a false positive — the risk of mistakenly finding an innocent person guilty. In contrast, a Type 2 error is a false negative — the risk of mistakenly acquitting a guilty person. Here are some regulatory examples:

Climate change. A Type 1 error is the risk of concluding that human beings caused climate change when they didn’t. A Type 2 error is the risk of rejecting this conclusion even though it’s true.

Chemicals. It’s a Type 1 error to incorrectly identify a chemical as a carcinogen, while it’s a Type 2 error to miss an actual carcinogen.

Endangered species. Type 1 error: mistakenly finding a species to be endangered. Type 2: overlooking an endangered species.

Ebola. It’s a Type 1 error to quarantine someone who doesn’t have Ebola; a Type 2 error is missing a contagious Ebola case.

The key point is that there’s a tradeoff: for a given body of evidence, the more you try to decrease the chance of a Type 1 error, the more you increase the chance of a Type 2 error. For instance, the more safeguards you put in place to prevent conviction of the innocent, the greater the chance that the guilty will escape punishment. The reverse is also true: you can eliminate safeguards to be sure that you convict the guilty, but then you’re also more likely to convict the innocent.
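For readers who like to see the tradeoff in numbers, here is a minimal sketch in Python. Everything in it is an assumption made up for illustration: each trial is reduced to a single "evidence score," innocent defendants score around 0, guilty ones around 2, and the court convicts whenever the score exceeds a threshold. The names `INNOCENT`, `GUILTY`, and `error_rates` are hypothetical, not part of any real model of the courts.

```python
from statistics import NormalDist

# Hypothetical model: every case produces one "evidence score".
# The means and spreads below are invented purely for illustration.
INNOCENT = NormalDist(mu=0.0, sigma=1.0)  # scores of innocent defendants
GUILTY = NormalDist(mu=2.0, sigma=1.0)    # scores of guilty defendants


def error_rates(threshold):
    """Return (type1, type2) rates when we convict if score > threshold."""
    type1 = 1.0 - INNOCENT.cdf(threshold)  # innocent, yet convicted
    type2 = GUILTY.cdf(threshold)          # guilty, yet acquitted
    return type1, type2


# A higher threshold stands in for "more safeguards" before convicting.
for threshold in (0.0, 0.5, 1.0, 1.5, 2.0):
    t1, t2 = error_rates(threshold)
    print(f"threshold={threshold:.1f}  Type 1={t1:.3f}  Type 2={t2:.3f}")
```

As the threshold rises, the Type 1 rate falls and the Type 2 rate climbs; lowering it does the opposite. In this toy setup, the only way to shrink both at once is better evidence, which would correspond to pulling the two score distributions further apart.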

The trouble is that people tend to focus on one kind of error in a particular situation and ignore the other. Thus, some people are so anxious to avoid the risk of incorrectly accepting the findings of climate scientists (Type 1 error) that they overlook the risk of wrongly rejecting those findings (Type 2 error). On the other hand, many people are so worried about missing a possible case of Ebola (Type 2 error) that they discount the risk of imposing quarantines that aren’t needed (Type 1 error). The two need to be kept in balance.

So don’t just focus on one kind of error without thinking about the other. It pays to consider both.

Reader Comments

3 Replies to ““You’re Just Not My Type (of error)””


You wrote: “The reverse is also true: you can eliminate safeguards to be sure that you convict the guilty, but then you’re also more likely to convict the guilty.”

Please elucidate. Thank you.

Now that is a specious argument: trying to marshal emotional support for a conclusion by stirring up fear of reaching a wrong conclusion. Not a scientific argument, that is for sure.

The implication of the argument is that it is obviously more dangerous to disagree with [self-styled] “climate scientists,” who offer their opinion that human action is leading to climate catastrophe, than it is to agree with them.

The argument is filled with so many bad assumptions, but what can you expect from a law professor? If the writer were a serious thinker, he might describe the alternatives he assumes are obvious, but then we could see whether he had even considered the consequences of either choice.

One major unjustified assumption he makes is that we are really faced with a choice as to whether “statistics” can provide the answer to what is driving global climate change (however that might be defined), and in what direction, and at what pace. Why assume that? Why not assume a witch doctor can predict climate change? There is no track record for either.

Another whole set of unstated assumptions involves the idea that we can productively intervene and control climate change, and that we can do so without causing greater problems than those that some people envision.

If human action is going to be controlled in an effort to control global climate, then individual liberty and property rights are going to have to be severely curtailed in those countries where such rights currently exist. Placing the future of the globe in the hands of international politicians and bureaucrats, who will be guided by [the correct group of] “climate scientists,” and who will attempt to implement their climate control scheme, is a lot more frightening to me than facing climate change without their interference or involvement. If drastic climate change does occur, I would look to free thinking and resourceful individuals and private companies for solutions, not to government bureaucrats and their “scientific” advisers and to cops and soldiers who will enforce government edicts.

It is not surprising to hear arguments from “philosopher kings” to persuade the public to accept the need for Plato’s Republic. Accepting such arguments could lead to real catastrophe.

Check out this picture for a pithy explanation: http://2378nh2nfow32gm3mb25krmuyy.wpengine.netdna-cdn.com/wp-content/uploads/2014/05/Type-I-and-II-errors1-625×468.jpg